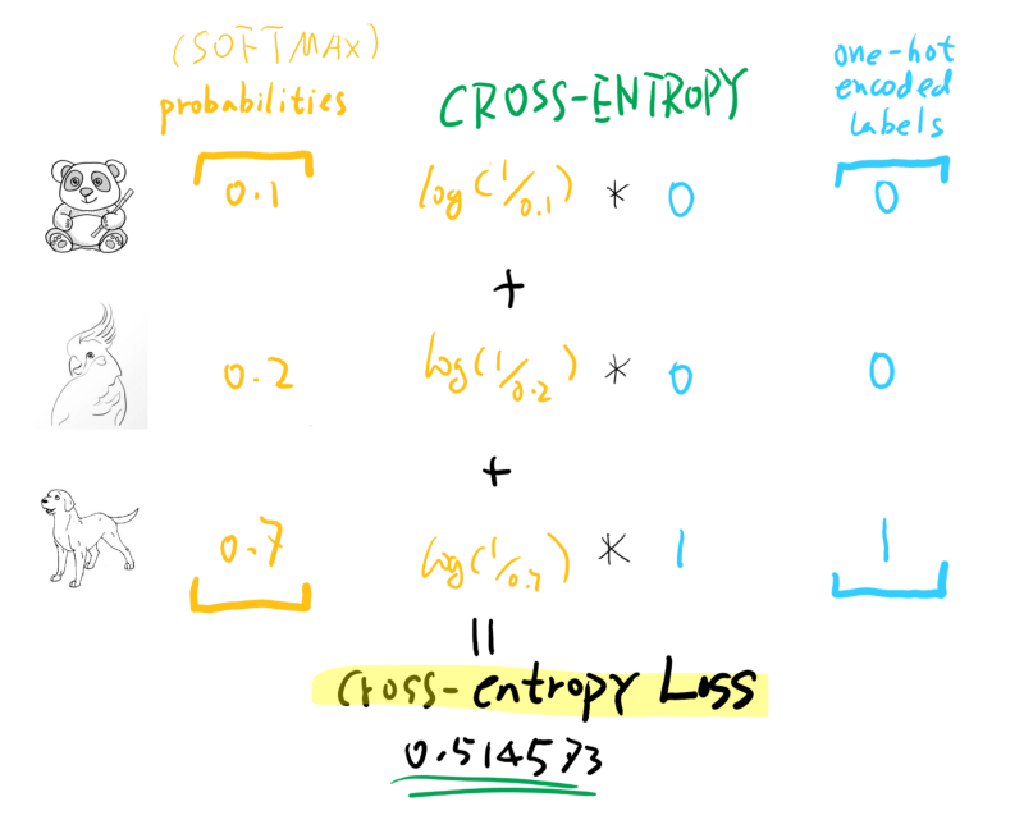

`RefinerContrastiveLoss(sim_thresh=0.1, epsilon=1e-16)`

A contrastive loss between the decoder outputs of a given embedding size. This loss can be used in lieu of a reconstruction loss, wherein the goal is to get a decoded signal closer to its target than other targets. As long as the reconstructed signal of a given input is closer to its target than any other target, the loss will remain zero.

`forward(sample_list, model_output)`: Calculates and returns the negative log likelihood.

`torch.nn.functional.cross_entropy(input, target, weight=None, size_average=None, ignore_index=-100, reduce=None, reduction='mean', label_smoothing=0.0)`

Parameters:

- `sample_list` (SampleList): SampleList containing the `attentions` attribute.
- `model_output` (Dict): Model output containing `attention_supervision`.

MultiLoss works with a config like the one below, where each loss's params and weights are defined:

    losses:
    - type: multi
      params:
      - type: logit_bce
        weight: 0.3
        params:

`forward(sample_list, model_output, *args, **kwargs)`: Calculates and returns the multi loss.

`MSLoss(alpha=50, beta=2, margin=0.5, hard_mining=True, is_multilabel=False)`

A Multi-Similarity loss between similar and dissimilar embeddings, as in “Multi-similarity loss with general pair weighting for deep metric learning”.

Parameters:

- `alpha`: parameter used in the loss function calculation
- `beta`: parameter used in the loss function calculation
- `margin`: parameter used in the loss function calculation
- `hard_mining`: if true, select only the hardest examples (defined based on margin)
- `is_multilabel`: True if there are more than two labels, false otherwise

`forward(sample_list, model_output)`

`CombinedLoss(weight_softmax)`

`forward(sample_list, model_output)`: Defines the computation performed at every call. Call the module instance rather than `forward` directly, since the former takes care of running the registered hooks while the latter silently ignores them.

`CaptionCrossEntropyLoss`

`forward(sample_list, model_output)`: Calculates and returns the cross entropy loss for captions.
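The "loss stays at zero while each decoded signal is closer to its own target than to any other" idea can be illustrated with a small hinge on cosine similarities. This is only a sketch under stated assumptions, not MMF's `RefinerContrastiveLoss` implementation: the function name `contrastive_margin_loss`, the use of cosine similarity, and the reading of `sim_thresh` as a margin are all hypothetical choices made for illustration.

```python
import torch
import torch.nn.functional as F


def contrastive_margin_loss(decoded, targets, sim_thresh=0.1):
    """Hypothetical hinge-style contrastive loss (illustration only).

    decoded, targets: tensors of shape (batch, dim); row i of `decoded`
    is expected to match row i of `targets`.
    """
    decoded = F.normalize(decoded, dim=-1)
    targets = F.normalize(targets, dim=-1)
    sim = decoded @ targets.t()            # (batch, batch) cosine similarities
    own = sim.diag().unsqueeze(1)          # similarity of each row to its own target
    off_diag = ~torch.eye(sim.size(0), dtype=torch.bool, device=sim.device)
    # Penalize any other target that comes within `sim_thresh` of the own target.
    violations = torch.clamp(sim - own + sim_thresh, min=0.0)
    return violations[off_diag].mean()


targets = torch.eye(3)
matched = contrastive_margin_loss(targets.clone(), targets)
swapped = contrastive_margin_loss(targets[[1, 0, 2]], targets)
print(matched.item(), swapped.item())
```

In this sketch the loss is zero only when every decoded row beats all other targets by at least `sim_thresh`; it needs a batch of at least two rows, since a single row has no other targets to compare against.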
`BinaryCrossEntropyLoss`

`forward(sample_list, model_output)`: Calculates and returns the binary cross entropy. Although the recipe for the forward pass needs to be defined within this function, one should call the Module instance afterwards instead of this, since the former takes care of running the registered hooks while the latter silently ignores them.

Parameters:

- `sample_list` (SampleList): SampleList containing the `targets` attribute.
- `model_output` (Dict): Model output containing the `scores` attribute.

Binary cross entropy loss

At the moment, the code is written for torch 1.4. The `logit_sanitation` helper takes a tensor and a minimum value `min_val`: it unsqueezes the input, builds `limit = torch.ones_like(unsqueezed_a) * min_val`, concatenates the two, and applies `torch.max` so that no value falls below `min_val`.

    # using pytorch 1.4
    def manual_bce_loss(pred_tensor, gt_tensor, epsilon=1e-8):
        a = logit_sanitation(1 - pred_tensor, epsilon)
        b = logit_sanitation(pred_tensor, epsilon)
        loss = -((1 - gt_tensor) * torch.log(a) + gt_tensor * torch.log(b))
        return loss

Currently, torch 1.6 is out, and according to the PyTorch docs, the `torch.max` function can receive two tensors and return element-wise max values. However, in 1.4 this feature is not yet supported, which is why I had to unsqueeze, concatenate and then apply `torch.max` in `logit_sanitation`. If you are using torch 1.6, you can refactor the `logit_sanitation` function with the updated `torch.max` function.

The above binary cross entropy calculation tries to avoid NaN occurrences caused by excessively small predicted values. `torch.log` of a value near zero returns a very large negative number (`-inf` at exactly zero), which can turn into NaN in later arithmetic, for example when it is multiplied by zero. The epsilon value limits the minimum of the value passed to `torch.log`.

Focal loss is also used quite frequently, so here it is. Using the functions defined above:

    def manual_focal_loss(pred_tensor, gt_tensor, gamma, epsilon=1e-8):
        a = logit_sanitation(1 - pred_tensor, epsilon)
        b = logit_sanitation(pred_tensor, epsilon)
        logit = (1 - gt_tensor) * a + gt_tensor * b
        focal_loss = -(1 - logit) ** gamma * torch.log(logit)
        return focal_loss
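The `logit_sanitation` helper that both loss functions call is mostly described in prose here (unsqueeze, concatenate, then `torch.max`). A minimal sketch of what it might look like follows, reconstructed from that description. The parameter name `val` and the second function `logit_sanitation_elementwise` are assumptions added for illustration; the second one shows the two-tensor form of `torch.max` mentioned for newer torch versions.

```python
import torch


def logit_sanitation(val, min_val):
    """Raise every element of `val` to at least `min_val` (unsqueeze/concat style)."""
    unsqueezed_a = torch.unsqueeze(val, -1)            # add a trailing axis
    limit = torch.ones_like(unsqueezed_a) * min_val    # same shape, filled with min_val
    stacked = torch.cat((unsqueezed_a, limit), -1)     # pair each value with the limit
    values, _ = torch.max(stacked, -1)                 # keep the larger of each pair
    return values


def logit_sanitation_elementwise(val, min_val):
    """Same result using torch.max on two tensors of equal shape."""
    return torch.max(val, torch.full_like(val, min_val))


probs = torch.tensor([0.0, 1e-12, 0.3, 1.0])
safe = logit_sanitation(probs, 1e-8)
safe_elementwise = logit_sanitation_elementwise(probs, 1e-8)

print(torch.log(probs))  # first entry is -inf
print(torch.log(safe))   # all entries are finite
```

Both variants leave values above `min_val` untouched and only lift the ones below it, so `torch.log` never sees an exact zero.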
